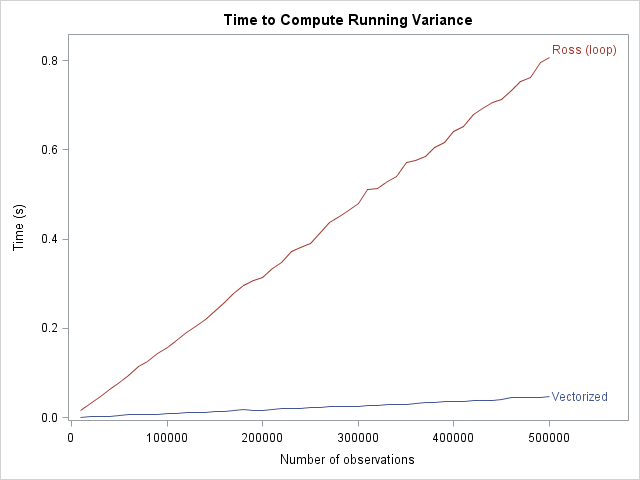

In image processing and computer vision, smoothing ideas are used in scale-space representations. One of the most common smoothing algorithms is the "moving average", which is often used to capture important trends in repeated statistical surveys.
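As a rough illustration of the moving average mentioned above, the sketch below replaces each run of consecutive points with its mean. The function name `moving_average` and the `window` parameter are hypothetical names chosen for this example, and dropping the incomplete windows at the ends is only one of several possible edge conventions.

```python
def moving_average(values, window):
    """Return the mean of every run of `window` consecutive points.

    Assumption for this sketch: only complete windows are used, so the
    result has len(values) - window + 1 entries.
    """
    if not 1 <= window <= len(values):
        raise ValueError("window must be between 1 and len(values)")
    return [
        sum(values[i:i + window]) / window
        for i in range(len(values) - window + 1)
    ]


# A single spike (8) among otherwise flat values is spread out and reduced.
noisy = [2, 2, 8, 2, 2]
smoothed = moving_average(noisy, 3)
print(smoothed)  # [4.0, 4.0, 4.0]
```

A larger `window` averages over more points and so smooths more strongly, at the cost of blurring genuine short-lived changes.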

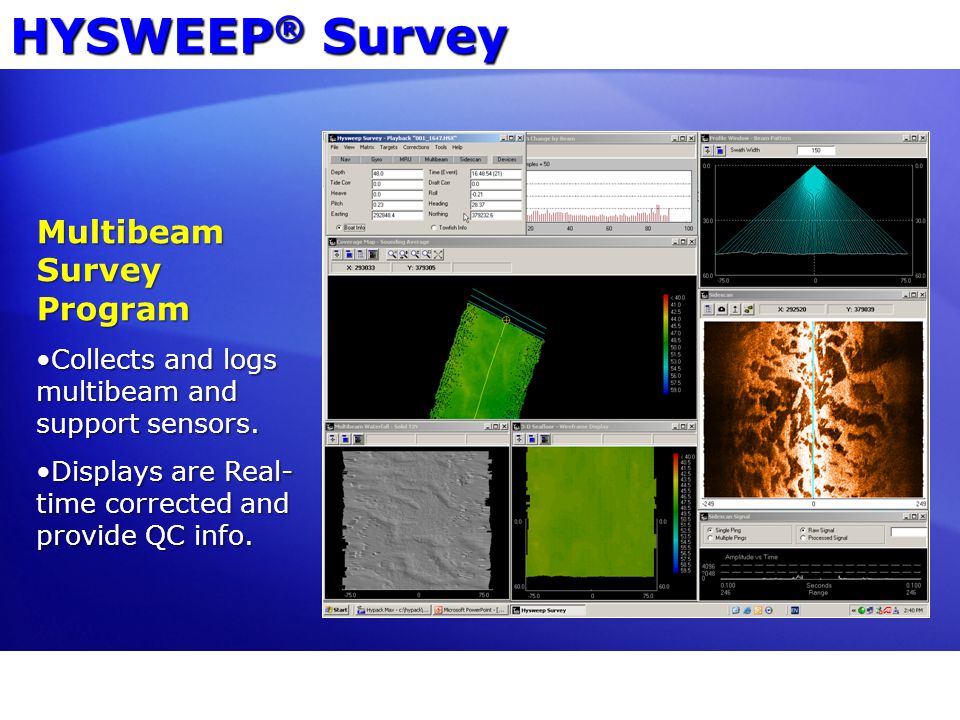


In the case that the smoothed values can be written as a linear transformation of the observed values, the smoothing operation is known as a linear smoother; the matrix representing the transformation is known as a smoother matrix or hat matrix. The operation of applying such a matrix transformation is called convolution, so the matrix is also called a convolution matrix or a convolution kernel. In the case of a simple series of data points (rather than a multi-dimensional image), the convolution kernel is a one-dimensional vector.
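The relationship between a smoother matrix and a one-dimensional convolution kernel can be checked numerically. The following is a minimal sketch using NumPy, taking a 3-point moving average as the linear smoother. The helper name `moving_average_matrix` is hypothetical, and the matrix is rectangular here because this example keeps only complete windows; other edge treatments would give a square matrix.

```python
import numpy as np


def moving_average_matrix(n, window):
    """Build the matrix S such that S @ y is the moving average of y.

    Assumption for this sketch: only complete windows are kept, so S has
    n - window + 1 rows and n columns.
    """
    rows = n - window + 1
    S = np.zeros((rows, n))
    for i in range(rows):
        S[i, i:i + window] = 1.0 / window  # equal weights over one window
    return S


y = np.array([2.0, 2.0, 8.0, 2.0, 2.0, 5.0])

# Linear smoother: smoothed values are a linear transformation of y.
S = moving_average_matrix(len(y), 3)
via_matrix = S @ y

# Same operation expressed as convolution with a one-dimensional kernel.
kernel = np.ones(3) / 3
via_convolution = np.convolve(y, kernel, mode="valid")

print(via_matrix)       # [4. 4. 4. 3.]
print(np.allclose(via_matrix, via_convolution))  # True
```

Each row of `S` is just the kernel shifted by one position, which is why the matrix form and the convolution form give the same smoothed values.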

In statistics and image processing, to smooth a data set is to create an approximating function that attempts to capture important patterns in the data, while leaving out noise or other fine-scale structures and rapid phenomena. In smoothing, the data points of a signal are modified so that individual points higher than the adjacent points (presumably because of noise) are reduced, and points that are lower than the adjacent points are increased, leading to a smoother signal. Smoothing may be used in two important ways that can aid in data analysis: (1) by being able to extract more information from the data, as long as the assumption of smoothing is reasonable, and (2) by being able to provide analyses that are both flexible and robust. Many different algorithms are used in smoothing.

Smoothing may be distinguished from the related and partially overlapping concept of curve fitting in the following ways:

- Curve fitting often involves the use of an explicit function form for the result, whereas the immediate results from smoothing are the "smoothed" values, with no later use made of a functional form if there is one.
- The aim of smoothing is to give a general idea of relatively slow changes of value, with little attention paid to the close matching of data values, while curve fitting concentrates on achieving as close a match as possible.
- Smoothing methods often have an associated tuning parameter which is used to control the extent of smoothing. Curve fitting will adjust any number of parameters of the function to obtain the 'best' fit.
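The contrast between smoothing and curve fitting can be made concrete with a small sketch. The synthetic data, the `smooth` helper, and the choice of a straight line as the fitted function are all assumptions made for illustration. The smoother returns only smoothed values and is controlled by a tuning parameter (`window`), while the fit returns the parameters of an explicit function.

```python
import numpy as np

# Synthetic data (an assumption for this example): a line plus noise.
rng = np.random.default_rng(0)
x = np.linspace(0.0, 1.0, 50)
y = 2.0 * x + 1.0 + rng.normal(scale=0.1, size=x.size)


def smooth(values, window):
    """Moving-average smoother; `window` is the tuning parameter.

    mode="same" keeps the output the same length as the input, but it
    pads with zeros, so values near both ends are biased toward zero.
    """
    kernel = np.ones(window) / window
    return np.convolve(values, kernel, mode="same")


# Smoothing: the result is a set of smoothed values, not a formula.
light = smooth(y, 3)    # small window, light smoothing
heavy = smooth(y, 11)   # large window, heavy smoothing

# Curve fitting: the result is the parameters of an explicit function,
# here y = a * x + b, chosen to match the data as closely as possible.
a, b = np.polyfit(x, y, deg=1)
print(f"fitted line: y = {a:.2f} * x + {b:.2f}")
```

Changing `window` changes how much detail the smoother suppresses, whereas the fitted coefficients `a` and `b` are determined by the least-squares criterion once the functional form has been chosen.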